For the better part of this year, we’ve been conducting interviews with data teams to help us better understand their data reliability needs, and one issue we keep hearing is about confidence.

Here are a couple of points that often come up:

- Data teams want to be confident that any changes they make won’t break things downstream.

- Data teams want the confidence to be able to say that the data is right, without just eyeballing it.

Stop making changes in production

Even with lack of confidence being such a frequently reported pain point, it’s still common to hear about data engineers making changes to data models in production.

In software development, it would be unthinkable to make untested changes directly to production code. So why is it common to hear of data teams changing the data pipeline without first testing those changes and validating that they won’t affect downstream usage?

It’s the lack of any testing methodology for model changes that results in uncertainty, in turn affecting the confidence of the team.

Increasing confidence when making data model changes

To restore data teams’ confidence in data modeling, we propose a two-step approach: first, create a staging environment; second, create a comparison report of the data profile from each environment. By comparing these data profiles, the effect of data model changes can be quickly and fully understood. The key is that the process should be automated as part of the CI process, to reduce friction.

1. Staging environment

Data teams need a ‘safe place’ to try things out, away from production, with the knowledge that any data model changes won’t break downstream usage. This is where a staging environment comes in. Automating staging environment creation is now relatively easy with dbt Cloud.

When a pull request is opened with changes to data modeling code, a staging environment is automatically created as part of the CI process that duplicates the production environment, or a specific state of production data.

2. Data profiling comparison

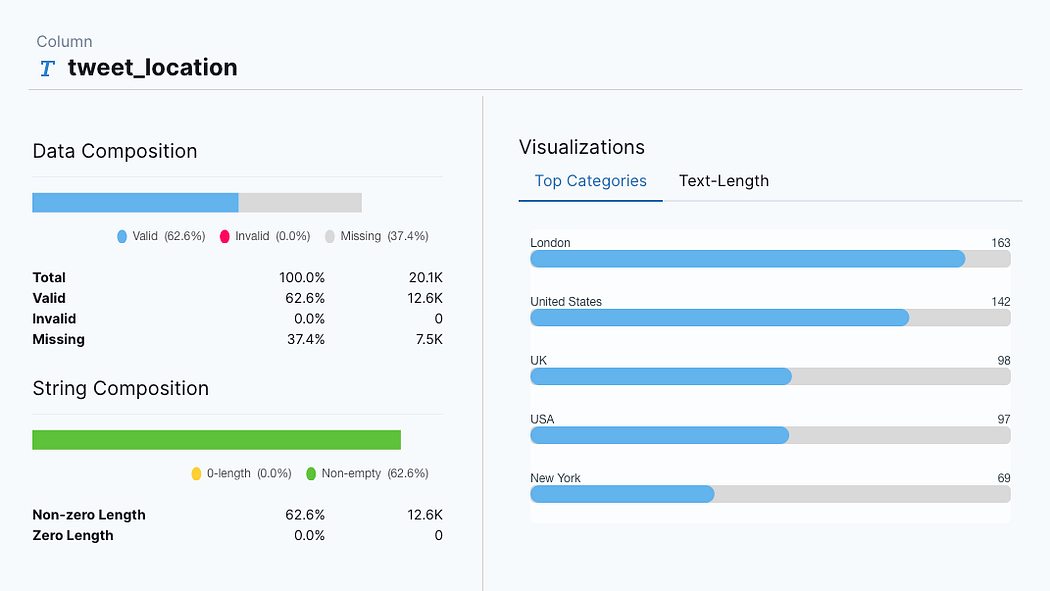

After the staging environment has been generated, the next step is to profile both the production and staging environments. A data profile provides key metrics about a data source, such as row counts, null counts, distinct values, and value ranges, and should be a key part of the ELT data modeling process. Profiling lets you understand the structure of the data from measurements rather than guesswork.
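To make the idea of a data profile concrete, here is a minimal sketch in plain Python. It is not how PipeRider or any other tool computes profiles; the function names (`profile_column`, `profile_table`), the chosen metrics, and the sample `orders` rows are all assumptions made for illustration. In practice the rows would come from a query against the production or staging warehouse.

```python
from typing import Any


def profile_column(values: list[Any]) -> dict[str, Any]:
    """Compute a few basic metrics for one column (illustrative only)."""
    non_null = [v for v in values if v is not None]
    metrics: dict[str, Any] = {
        "count": len(values),
        "nulls": len(values) - len(non_null),
        "distinct": len(set(non_null)),
    }
    # Range and mean are only reported when every non-null value is numeric.
    numeric = [
        v for v in non_null
        if isinstance(v, (int, float)) and not isinstance(v, bool)
    ]
    if numeric and len(numeric) == len(non_null):
        metrics["min"] = min(numeric)
        metrics["max"] = max(numeric)
        metrics["mean"] = sum(numeric) / len(numeric)
    return metrics


def profile_table(rows: list[dict[str, Any]]) -> dict[str, dict[str, Any]]:
    """Profile every column that appears in a list of row dictionaries."""
    columns = sorted({key for row in rows for key in row})
    return {col: profile_column([row.get(col) for row in rows]) for col in columns}


# Hypothetical sample data standing in for a warehouse table.
orders = [
    {"order_id": 1, "amount": 20.0, "status": "paid"},
    {"order_id": 2, "amount": None, "status": "paid"},
    {"order_id": 3, "amount": 35.5, "status": "refunded"},
]
profile = profile_table(orders)
```

Running the same function against production and staging yields two dictionaries with identical shape, which is what makes a later comparison straightforward.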

Compare data profiles

Here’s where the magic happens: by comparing the production and staging data profiles, the data team can see how the data looked both before and after the data modeling changes. This is often called a ‘diff’ or a ‘delta’.

The comparison of the two data profiles should be automated and then attached to the PR. The PR can then be reviewed easily, with any changes to data profiling metrics clearly shown. Data teams need only review the PR comments, check the data profile report, and, if things are as expected, merge to main.
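As a rough sketch of what such an automated comparison might do, the Python below diffs two profile dictionaries and renders the differences as a Markdown table suitable for a PR comment. The profile layout (column name mapped to a dictionary of metrics), the function names, and the sample numbers are all hypothetical; posting the comment to the PR is left out because it depends on your CI provider.

```python
from typing import Any

Profile = dict[str, dict[str, Any]]
Change = tuple[str, str, Any, Any]


def diff_profiles(prod: Profile, staging: Profile) -> list[Change]:
    """Return (column, metric, production value, staging value) for each difference."""
    changes: list[Change] = []
    for column in sorted(set(prod) | set(staging)):
        before = prod.get(column, {})
        after = staging.get(column, {})
        for metric in sorted(set(before) | set(after)):
            old, new = before.get(metric), after.get(metric)
            if old != new:
                changes.append((column, metric, old, new))
    return changes


def render_pr_comment(changes: list[Change]) -> str:
    """Format the changes as a Markdown table for a PR comment."""
    if not changes:
        return "No changes to profiled metrics."
    lines = [
        "| column | metric | production | staging |",
        "| --- | --- | --- | --- |",
    ]
    for column, metric, old, new in changes:
        lines.append(f"| {column} | {metric} | {old} | {new} |")
    return "\n".join(lines)


# Hypothetical profiles: staging adds a `region` column and introduces nulls in `amount`.
prod_profile = {
    "amount": {"count": 1000, "nulls": 0},
    "status": {"count": 1000, "distinct": 3},
}
staging_profile = {
    "amount": {"count": 1000, "nulls": 12},
    "status": {"count": 1000, "distinct": 3},
    "region": {"count": 1000, "distinct": 5},
}
changes = diff_profiles(prod_profile, staging_profile)
comment = render_pr_comment(changes)
```

A column or metric that exists in only one environment shows up with `None` on the other side, so new and dropped columns are visible in the same table as changed metrics.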

Here’s a mock-up of what the profile comparison could look like:

Gone are the days of eyeballing the data itself. Gone are the days of random SQL queries to check things ‘look right’. The data profile diff will show exactly the structure of the data in both previous and modified forms.

Here’s how the process looks before and after adding the staging + profiling comparison steps to the CI process:

Confidence through feedback

It’s difficult to have confidence in a system if there is no feedback that what you’re doing is correct. So, it’s natural that data teams would lack confidence making changes if they don’t know the effect of these changes.

The comparison of data profiles from staging and production provides feedback to data engineers about the changes that have been made. If things don’t look right, it’s back to the data models to continue working. Because the work happened in a staging environment, no issues have had a chance to enter production.
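The feedback can also be turned into an automatic check, although this goes a step beyond the review process described here. The sketch below flags any metric whose relative change between production and staging exceeds a tolerance. The flat metric names, the 5% default tolerance, and the function names are assumptions for illustration; sensible thresholds depend on the data.

```python
def relative_change(old: float, new: float) -> float:
    """Relative change from old to new; any change from zero counts as infinite."""
    if old == 0:
        return 0.0 if new == 0 else float("inf")
    return abs(new - old) / abs(old)


def check_metrics(
    prod: dict[str, float],
    staging: dict[str, float],
    tolerance: float = 0.05,
) -> list[str]:
    """List metrics that are missing in staging or moved more than the tolerance."""
    failures: list[str] = []
    for metric, old in prod.items():
        new = staging.get(metric)
        if new is None:
            failures.append(f"{metric}: missing in staging")
        elif relative_change(old, new) > tolerance:
            failures.append(f"{metric}: {old} -> {new}")
    return failures


# Hypothetical metrics: row count grows by 1%, but nulls appear where there were none.
prod_metrics = {"orders.row_count": 1000, "orders.amount.nulls": 0}
staging_metrics = {"orders.row_count": 1010, "orders.amount.nulls": 12}
failures = check_metrics(prod_metrics, staging_metrics)
```

A CI step could exit with a non-zero status when `failures` is non-empty, so that unexpected changes block the merge until someone has looked at them.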

Automating the habit

Humans are creatures of habit, so a new way of doing something needs to be easy to implement, easy to repeat, and clearly rewarding. Only when these three factors are satisfied will teams be willing to adopt the process.

Building the staging environment creation and data profile generation into the CI process means that minimal changes are required to existing processes. The automated nature of CI means there is minimal effort to continue the process, and the data profile brings increased insight, and subsequently confidence, into making data model changes.

Conclusion

We feel this process can effectively increase confidence for data teams by pairing the safety net of staging with the increased knowledge provided by profiling data and comparing profiles of its different states. We’re currently working on implementing this process for use with dbt, with PipeRider for data profiling.

Which process are you currently using for reviewing data model changes before they go to production?

What do you run, or do, after model changes to make sure the data looks ‘normal’?

InfuseAI is solving data quality issues

InfuseAI makes PipeRider, the open-source data reliability CLI tool that adds data profiling and assertions to data warehouses such as BigQuery, Snowflake, Redshift and more. Data profile and data assertion results are provided in an HTML report each time you run PipeRider.

- GitHub: PipeRider

- Twitter: @infuseai

- Mastodon: @piperider@fosstodon.org

This blog has been republished by AIIA. To view the original article, please click HERE.
